Building an agent is the easy part. Knowing whether it actually works in production is the field's biggest unsolved problem. Solo.io CEO Idit Levine put it plainly at KubeCon Europe: organizations have frameworks for building agents, gateways for connecting them, and registries for governing them — but no consistent way to know if an agent is actually reliable enough to trust in production.

That gap is why teams ship agents that look fine in demos and collapse on real workloads. An agent that routes Kubernetes traffic correctly 80% of the time isn't an 80% solution — it's a production incident waiting to happen. Evaluation isn't a nice-to-have; it's the difference between a useful system and an expensive liability.

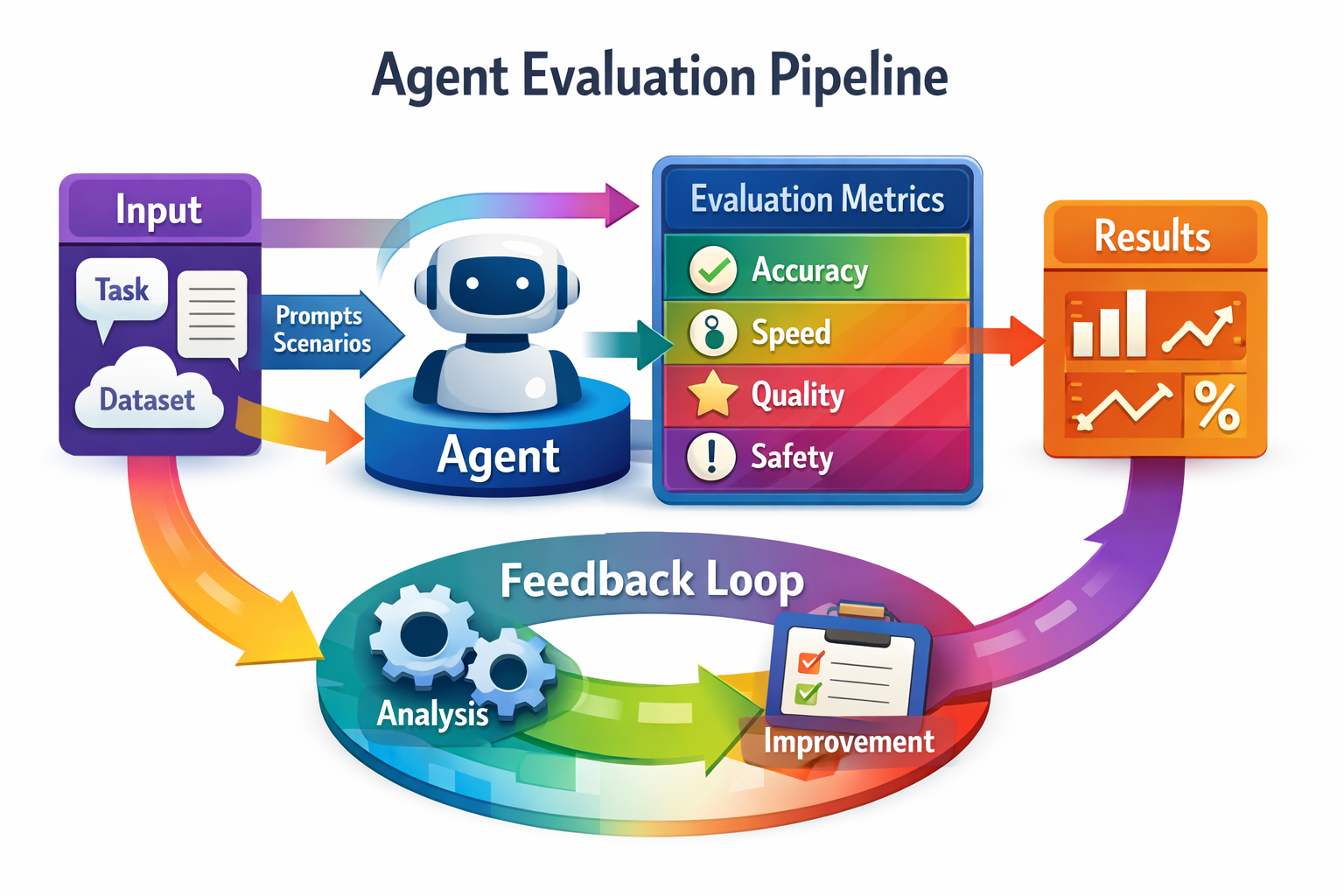

This guide covers the mental models, frameworks, and practical checklists you need to evaluate agents before they touch production traffic.

The Core Problem: What You're Actually Measuring

Most teams evaluate agents the way they evaluate static models — run a benchmark, get a number, ship. This breaks down for agents because agent performance depends on three things simultaneously: the underlying model, the scaffold wrapping it, and the task distribution you're testing on.

A paper posted to arXiv that studied 33 agent scaffolds and more than 70 model configurations found that absolute score prediction degrades badly when you change the scaffold around the same model, but rank-order prediction stays stable. This asymmetry matters: you can reliably say "agent A is better than agent B" even when you can't predict their exact scores under new conditions. Build your eval process around ranking, not absolute numbers.
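One way to check whether a scaffold change preserved the ranking is to compare the two score lists with a rank correlation such as Kendall's tau. The sketch below assumes you already have one aggregate score per agent under each scaffold. The agent names and numbers are made up for illustration and do not come from the paper.

```python
from itertools import combinations


def kendall_tau(scores_a: dict[str, float], scores_b: dict[str, float]) -> float:
    """Kendall's tau-a between two score lists for the same agents.

    +1.0: both conditions order the agents identically.
    -1.0: the order is fully reversed. Tied pairs count as neither.
    """
    agents = sorted(scores_a.keys() & scores_b.keys())
    concordant = discordant = 0
    for x, y in combinations(agents, 2):
        product = (scores_a[x] - scores_a[y]) * (scores_b[x] - scores_b[y])
        if product > 0:
            concordant += 1
        elif product < 0:
            discordant += 1
    n_pairs = len(agents) * (len(agents) - 1) // 2
    return (concordant - discordant) / n_pairs if n_pairs else 0.0


# Hypothetical scores for three agents under two scaffolds: the absolute
# numbers shift a lot, but the ordering is unchanged.
scaffold_a = {"agent_x": 0.62, "agent_y": 0.48, "agent_z": 0.31}
scaffold_b = {"agent_x": 0.41, "agent_y": 0.29, "agent_z": 0.12}
print(kendall_tau(scaffold_a, scaffold_b))  # 1.0
```

A tau that stays near 1.0 while absolute scores drift is exactly the pattern described above. If you prefer a library, `scipy.stats.kendalltau` computes the tie-corrected tau-b variant.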

The second core problem is conflating two fundamentally different failure modes: accuracy failures and experience failures. The EVA framework from ServiceNow, built for voice agents but applicable broadly, makes this split explicit. An agent can reach the correct end state — book the flight, update the routing rule, answer the question — while fabricating a policy detail, misreading a confirmation code, or overwhelming the user with information they can't process. Task completion is a necessary but insufficient signal.

In EVA's results, no tested configuration dominated on both accuracy and experience. That means you need to decide upfront which failure mode is more acceptable for your specific use case, and design your eval accordingly.
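One way to make that trade-off explicit is to compute which configurations are Pareto-optimal on the two axes, meaning no other configuration beats them on both accuracy and experience. When more than one configuration survives, choosing between them is a product decision rather than a measurement problem. This is a minimal sketch, and the configuration names and scores are hypothetical.

```python
from typing import NamedTuple


class ConfigResult(NamedTuple):
    name: str
    accuracy: float    # e.g. task-completion rate
    experience: float  # e.g. an averaged conversational-quality score


def dominates(a: ConfigResult, b: ConfigResult) -> bool:
    """True if a is at least as good as b on both axes and strictly better on one."""
    return (
        a.accuracy >= b.accuracy
        and a.experience >= b.experience
        and (a.accuracy > b.accuracy or a.experience > b.experience)
    )


def pareto_front(results: list[ConfigResult]) -> list[ConfigResult]:
    """Configurations that no other configuration dominates."""
    return [r for r in results if not any(dominates(o, r) for o in results if o is not r)]


results = [
    ConfigResult("config_a", accuracy=0.81, experience=0.55),
    ConfigResult("config_b", accuracy=0.64, experience=0.78),
    ConfigResult("config_c", accuracy=0.60, experience=0.50),
]
print([r.name for r in pareto_front(results)])  # ['config_a', 'config_b']
```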

Layer 1: Task Completion and Faithfulness

Start with the basics. For every scenario you test, you need two deterministic checks:

Did the agent reach the correct end state? Compare the expected final state of your system (database record, API response, file content) against the actual result after the agent ran. This should be code-based, not LLM-as-judge. Binary pass/fail is fine here; partial-credit scoring adds complexity without much signal at this layer.

Did the agent stay grounded? Log every tool call the agent made, every piece of context it retrieved, and every claim it made in its response. Then diff claims against tool outputs and provided context. Hallucinated policy details, fabricated data points, and invented tool results are invisible to task completion checks but directly harm users. This is where LLM-as-judge earns its place — scoring faithfulness across a conversation is genuinely hard to do with regex.
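The first of these two checks is straightforward to code. The following sketch assumes the system state can be read back as a dictionary, for example a database row or a parsed API response. The field names are hypothetical. The check returns a binary verdict along with the mismatching fields, which is what you need when a run fails.

```python
from typing import Any

_MISSING = object()  # marks a field absent from the actual state


def check_end_state(
    expected: dict[str, Any], actual: dict[str, Any]
) -> tuple[bool, dict[str, tuple[Any, Any]]]:
    """Binary pass/fail on the fields the scenario specifies.

    Fields in `actual` that the scenario doesn't mention (timestamps,
    audit columns) are ignored. Returns (passed, mismatches), where
    mismatches maps each failing field to (expected, actual).
    """
    mismatches = {
        key: (want, actual.get(key, _MISSING))
        for key, want in expected.items()
        if actual.get(key, _MISSING) != want
    }
    return not mismatches, mismatches


# Hypothetical routing-rule record read back after the agent ran.
expected = {"route": "/api/v2", "backend": "svc-b", "weight": 100}
actual = {"route": "/api/v2", "backend": "svc-a", "weight": 100, "updated_by": "agent"}
passed, diff = check_end_state(expected, actual)
print(passed, diff)  # False {'backend': ('svc-b', 'svc-a')}
```

The sentinel ensures that a missing field never silently matches an expected `None`.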

A few practical implementation notes:

- Store tool call logs and final outputs for every eval run. You can't debug regressions you didn't log.

- For multi-step agents, log intermediate states too — not just the final output. A nanobot-style agent loop that iterates up to 40 times gives you 40 potential failure points, and the failure is rarely at step 40.

- Separate your faithfulness judge from the model you're evaluating. Using the same provider for both introduces systematic bias — the judge will tend to favor outputs that match its own style.
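The logging advice above can be sketched as a small trace recorder that writes one JSON line per step. Each line holds the tool name, its arguments and result, any error, and an optional snapshot of intermediate state. The record layout and file name are assumptions, not a standard format. The point is that every eval run leaves a replayable artifact.

```python
import json
import tempfile
import time
from dataclasses import asdict, dataclass, field
from pathlib import Path
from typing import Any, Optional


@dataclass
class StepRecord:
    run_id: str
    step: int
    tool: str
    arguments: dict[str, Any]
    result: Any
    error: Optional[str] = None
    state_snapshot: Optional[dict[str, Any]] = None  # intermediate state, if captured
    timestamp: float = field(default_factory=time.time)


class TraceRecorder:
    """Append-only JSONL trace for one eval run (hypothetical helper)."""

    def __init__(self, run_id: str, path: Path) -> None:
        self.run_id = run_id
        self.path = path
        self.step = 0

    def log(
        self,
        tool: str,
        arguments: dict[str, Any],
        result: Any,
        error: Optional[str] = None,
        state: Optional[dict[str, Any]] = None,
    ) -> None:
        self.step += 1
        record = StepRecord(self.run_id, self.step, tool, arguments, result, error, state)
        # default=str keeps non-JSON values (datetimes, custom objects) loggable.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(asdict(record), default=str) + "\n")

    def read(self) -> list[dict[str, Any]]:
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f]


with tempfile.TemporaryDirectory() as tmp:
    rec = TraceRecorder("run-001", Path(tmp) / "run-001.jsonl")
    rec.log("get_route", {"name": "checkout"}, {"backend": "svc-a"})
    rec.log("update_route", {"name": "checkout", "backend": "svc-b"}, None,
            error="timeout", state={"backend": "svc-a"})
    records = rec.read()

print([(r["step"], r["tool"], r["error"]) for r in records])
# [(1, 'get_route', None), (2, 'update_route', 'timeout')]
```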

Layer 2: Behavioral Consistency

Single-run pass/fail is misleading. The metric that actually predicts production reliability is pass^k — the probability that the agent succeeds on all k runs of the same scenario.

EVA's methodology reports both pass@3 (succeeds at least once in three tries — peak capability) and pass^3 (succeeds all three times — actual reliability). The gap between these two numbers across all tested configurations was substantial. Agents that look capable on pass@3 often have consistency problems that only show up in pass^3.

For your own eval, three runs per scenario is a reasonable minimum. More is better for high-stakes tasks. The scenarios where your pass@3 and pass^3 diverge most are your highest-priority debugging targets — they indicate the agent can solve the problem but can't do it reliably, which usually means prompt fragility, tool call ordering issues, or context window sensitivity.
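Both metrics can be computed from raw pass/fail outcomes. The sketch below uses the standard combinatorial estimators for n runs with c successes. When n equals k, they reduce to "at least one of k succeeded" (pass@k) and "all k succeeded" (pass^k), and they also let you run more than k times. Scenarios are then sorted by the gap between the two metrics, largest first. The scenario IDs and outcomes are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Chance that at least one of k runs succeeds, given c successes in n runs."""
    if n < k:
        raise ValueError("need at least k runs per scenario")
    return 1.0 - comb(n - c, k) / comb(n, k)


def pass_hat_k(n: int, c: int, k: int) -> float:
    """Chance that all k runs succeed (pass^k), given c successes in n runs."""
    if n < k:
        raise ValueError("need at least k runs per scenario")
    return comb(c, k) / comb(n, k)


def summarize(outcomes: dict[str, list[bool]], k: int = 3) -> list[tuple[str, float, float]]:
    """Per-scenario (id, pass@k, pass^k), largest pass@k - pass^k gap first."""
    rows = []
    for scenario, runs in outcomes.items():
        n, c = len(runs), sum(runs)
        rows.append((scenario, pass_at_k(n, c, k), pass_hat_k(n, c, k)))
    return sorted(rows, key=lambda r: r[1] - r[2], reverse=True)


outcomes = {
    "rebook_with_seat": [True, False, True],  # capable but unreliable
    "cancel_booking": [True, True, True],
    "update_baggage": [False, False, False],
}
for scenario, p_at, p_hat in summarize(outcomes):
    print(f"{scenario}: pass@3={p_at:.2f} pass^3={p_hat:.2f}")
```

Scenarios at the top of this list are the "can solve it, but not reliably" cases described above.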

Layer 3: Benchmark Efficiency

Running full eval suites is expensive. Every scenario requires interactive rollouts with tool calls and multi-step reasoning — you can't batch-evaluate agents the way you evaluate classifiers.

The arXiv benchmarking paper proposes a practical optimization borrowed from Item Response Theory: filter to tasks with 30–70% historical pass rates. Tasks below 30% are so hard that nearly every agent fails them, and tasks above 70% are so easy that nearly every agent passes them; either way, they add little ranking signal. The middle band, where agents actually diverge, is where your eval budget should go.

This filter reduces the number of required evaluation tasks by 44–70% while maintaining high rank fidelity. It outperforms random sampling (which has high variance across seeds) and greedy task selection under distribution shift.

Practical implementation: maintain a task library with historical pass rates per agent type. Tag each task with its difficulty tier. For rapid iteration — A/B testing a prompt change, comparing two model versions — run only the mid-difficulty subset. For release decisions, run the full suite.
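A minimal version of such a task library is sketched below. It assumes each task stores one historical pass rate, such as the mean pass rate across the agents you have already evaluated. The 30–70% band comes from the paper described above. The tier names, task IDs, and inclusive boundaries are illustrative choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    task_id: str
    historical_pass_rate: float  # fraction of past runs (across agents) that passed


def difficulty_tier(task: Task, low: float = 0.30, high: float = 0.70) -> str:
    """Tag a task as hard, mid, or easy; the band edges count as mid."""
    if task.historical_pass_rate < low:
        return "hard"
    if task.historical_pass_rate > high:
        return "easy"
    return "mid"


def select_tasks(library: list[Task], mode: str) -> list[Task]:
    """'iterate' -> mid-difficulty subset for fast A/B checks; 'release' -> full suite."""
    if mode == "release":
        return list(library)
    if mode == "iterate":
        return [t for t in library if difficulty_tier(t) == "mid"]
    raise ValueError(f"unknown mode: {mode!r}")


library = [
    Task("configure-mtls", 0.12),
    Task("rollback-canary", 0.45),
    Task("add-retry-policy", 0.66),
    Task("list-pods", 0.95),
]
print([t.task_id for t in select_tasks(library, "iterate")])
# ['rollback-canary', 'add-retry-policy']
```

Historical pass rates drift as agents improve, so recompute the tiers periodically. Otherwise, yesterday's mid-band tasks slowly turn into ones every agent passes.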

Layer 4: Multi-Step and Multi-Hop Reliability

Single-turn eval misses the failure mode that breaks most production agents: hop-count degradation. As reasoning chains grow longer and tool calls accumulate, agents lose track of the original goal, contradict earlier steps, or get stuck in loops.

EVA identified multi-step workflows as the dominant complexity breaker across all tested configurations. In their airline domain, rebooking a flight while preserving ancillary services — seat assignments, baggage — was the task that separated capable agents from production-ready ones. The failure pattern was consistent: agents that handled each sub-task correctly in isolation failed to maintain constraint satisfaction across the full workflow.

Test this explicitly. Design scenarios that require three to five or more sequential tool calls where later steps depend on earlier ones. Measure not just whether the final state is correct, but whether the agent maintained coherent state across the full chain. Log every intermediate tool call result and check for contradictions.
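One way to check coherence across the chain is to express the workflow's constraints as invariants. You then evaluate them against every logged intermediate state, not only the last one. The sketch below uses a simplified version of the rebooking example and reports the first step at which a constraint breaks. The state fields and invariant names are hypothetical.

```python
from typing import Any, Callable, Optional

State = dict[str, Any]
Invariant = Callable[[State, State], bool]  # (initial_state, current_state) -> holds?


def first_violation(states: list[State], invariants: dict[str, Invariant]) -> Optional[tuple[int, str]]:
    """Return (step_index, invariant_name) for the first broken constraint, or None."""
    initial = states[0]
    for step, state in enumerate(states[1:], start=1):
        for name, holds in invariants.items():
            if not holds(initial, state):
                return step, name
    return None


invariants = {
    # If the passenger had a seat, they must still have one after rebooking.
    "seat_assigned": lambda init, cur: init.get("seat") is None or cur.get("seat") is not None,
    # Checked baggage count must carry over unchanged.
    "bags_preserved": lambda init, cur: cur.get("bags") == init.get("bags"),
}

# Logged state after each tool call: the flight changes as intended,
# but the seat assignment is silently dropped at step 2.
states = [
    {"flight": "XY100", "seat": "14C", "bags": 1},
    {"flight": "XY100", "seat": "14C", "bags": 1, "hold": "XY204"},
    {"flight": "XY204", "seat": None, "bags": 1},
    {"flight": "XY204", "seat": None, "bags": 1, "confirmed": True},
]
print(first_violation(states, invariants))  # (2, 'seat_assigned')
```

If a workflow legitimately passes through states that violate a constraint (for example, a seat released before it is reassigned), evaluate the invariants only at the steps you treat as commit points.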

For self-improving agents, such as Meta's DGM-Hyperagents, which modify their own code to improve themselves, this layer is especially critical. These systems can learn to game simple completion metrics by optimizing for the score rather than the actual task. EVA's central lesson, that task completion alone is an insufficient signal, applies here too: you need evaluation that's harder to fool than your agent is clever. Topical distractors, adversarial inputs, and compound requests are your friends.

Layer 5: Infrastructure and Observability

For agents operating on real infrastructure (the use case Solo.io's agentevals was designed for), evaluation needs to go beyond correctness into operational reliability.

Agentevals simulates multi-step infrastructure workflows: configuring microservices, updating routing policies, troubleshooting Kubernetes clusters. Each run generates reproducible OpenTelemetry logs with latency, tool call counts, and outcome data. This lets you compare different LLM backends on the same task under controlled conditions — which matters when you're deciding whether GPT-4o or Llama 3 handles your specific workload better.

Key metrics to track at this layer:

- Latency per task type: Some agents are fast on simple tasks and slow on complex ones. Know the distribution, not just the average.

- Tool call efficiency: How many tool calls does the agent make per task? An agent that calls the same API five times when once would suffice is burning cost and latency.

- Error recovery rate: When a tool call fails or returns unexpected output, does the agent recover gracefully or spiral? Log tool errors separately and measure recovery success.

- Iteration count distribution: For ReAct-style loops, track how many iterations different task types require. A task that sometimes completes in 3 iterations and sometimes hits the 40-iteration limit is a reliability problem even if it usually succeeds.
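Once you have per-run records, such as those exported from an OpenTelemetry-instrumented harness, the four metrics above reduce to simple aggregations. The record fields below are assumptions about what your logs contain, not the agentevals schema. The 40-iteration limit echoes the loop cap mentioned earlier. Recovery is approximated coarsely as "the run hit a tool error but still succeeded."

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import mean, quantiles
from typing import Optional


@dataclass
class RunRecord:
    task_type: str
    latency_s: float
    tool_calls: int
    tool_errors: int  # tool calls that failed or returned unexpected output
    succeeded: bool
    iterations: int


def by_task_type(runs: list[RunRecord]) -> dict[str, list[RunRecord]]:
    groups: dict[str, list[RunRecord]] = defaultdict(list)
    for r in runs:
        groups[r.task_type].append(r)
    return dict(groups)


def latency_p50_p95(runs: list[RunRecord]) -> tuple[float, float]:
    """Median and 95th-percentile latency; needs at least two runs."""
    cuts = quantiles([r.latency_s for r in runs], n=100, method="inclusive")
    return cuts[49], cuts[94]


def mean_tool_calls(runs: list[RunRecord]) -> float:
    return mean(r.tool_calls for r in runs)


def error_recovery_rate(runs: list[RunRecord]) -> Optional[float]:
    """Share of runs with at least one tool error that still succeeded (coarse proxy)."""
    with_errors = [r for r in runs if r.tool_errors > 0]
    if not with_errors:
        return None
    return sum(r.succeeded for r in with_errors) / len(with_errors)


def iteration_profile(runs: list[RunRecord], max_iterations: int = 40) -> tuple[Counter, float]:
    """Histogram of iteration counts plus the share of runs that hit the loop limit."""
    counts = Counter(r.iterations for r in runs)
    hit_limit = sum(r.iterations >= max_iterations for r in runs) / len(runs)
    return counts, hit_limit


# Hypothetical run records for two task types.
runs = [
    RunRecord("update_route", 4.1, 3, 0, True, 3),
    RunRecord("update_route", 4.6, 3, 1, True, 5),
    RunRecord("update_route", 38.0, 17, 2, False, 40),
    RunRecord("troubleshoot", 12.5, 8, 0, True, 9),
    RunRecord("troubleshoot", 15.0, 9, 1, False, 12),
]
for task_type, group in by_task_type(runs).items():
    p50, p95 = latency_p50_p95(group)
    counts, hit_limit = iteration_profile(group)
    print(task_type, f"p50={p50:.1f}s p95={p95:.1f}s",
          f"tool_calls={mean_tool_calls(group):.1f}",
          f"recovery={error_recovery_rate(group)}",
          f"limit_hits={hit_limit:.0%}", dict(counts))
```

Reporting p50 alongside p95 per task type, instead of one overall mean, is what surfaces the fast-on-simple, slow-on-complex pattern described above.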

What This Means

The practical takeaway is that agent evaluation requires a layered approach that no single framework currently covers end-to-end. You need deterministic task completion checks, faithfulness scoring, consistency measurement across multiple runs, benchmark efficiency filtering, and operational observability — and these need to be in place before you ship, not after your first production incident.

- For developers: Instrument your agent loop from day one. Log tool calls, iteration counts, intermediate states, and final outputs for every eval run. Without this data, you're debugging in the dark when something breaks in production.

- For founders: The pass@3 vs pass^3 gap is your most important reliability metric. An agent that can solve a problem is not the same as an agent that reliably solves it. Set a minimum pass^3 threshold before approving any agent for production use.

- For platform teams: Adopt the 30–70% difficulty filter for your eval suite. It dramatically reduces evaluation cost while preserving the ranking fidelity you need for model selection decisions.

- For teams building self-improving agents: Weak eval metrics get gamed. As Meta's hyperagent research demonstrates, these systems develop sophisticated optimization strategies on their own. Your benchmark needs adversarial scenarios, topical distractors, and compound tasks — not just happy-path workflows.